Subjective assessments have long been central to higher education. They allow faculty to evaluate how students reason, structure arguments, and apply concepts beyond predefined answers. In many disciplines, they remain the most reliable way to assess depth of understanding.

At the same time, institutions face increasing pressure to conduct these evaluations at scale: across large cohorts, within tight academic schedules, and for diverse learner profiles. This has made subjective assessment one of the most demanding components of academic delivery.

The challenge is not the value of subjective assessment, but the sustainability of the process.

The Tension Between Depth and Scale

Subjective evaluation depends on careful reading, interpretation, and judgement. When applied to small groups, this works well. As cohort sizes grow, maintaining consistency, fairness, and timely feedback becomes difficult.

Institutions increasingly observe:

- Variability in evaluation standards across sections

- Delays in feedback due to grading load

- Limited visibility into how marks were awarded

- Student focus on scores rather than understanding

These challenges are structural, not individual. They reflect the limits of manual processes in a scaled academic environment.

Why Structure Matters in Subjective Evaluation

Subjective assessment does not mean unstructured assessment. Clear criteria and shared standards are essential to preserve academic rigor.

Rubrics provide this structure. They help define what constitutes understanding, application, clarity, and reasoning. They also make evaluation transparent for both faculty and students.

However, creating and applying rubrics consistently across courses and cohorts requires effort. Without support, rubrics risk becoming either too generic or too time-consuming to use.

Subjective Assessments Within Intelligent Learning Infrastructure

Within Intelligent Learning Infrastructure, subjective assessments are supported through rubric-driven, AI-assisted evaluation frameworks. The intent is not to replace academic judgement, but to support it with structure and consistency.

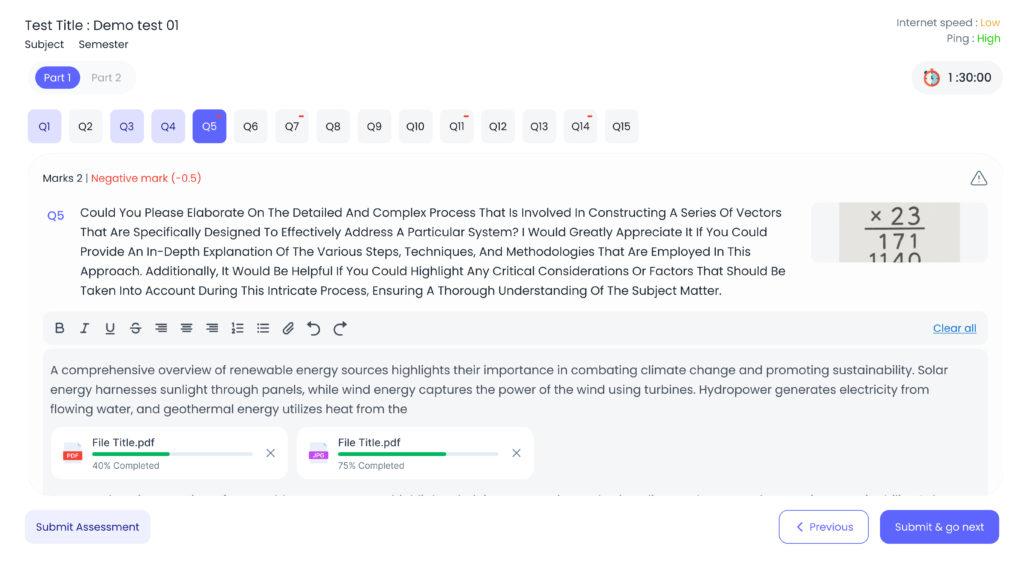

The evaluation process follows a clear academic flow:

- Students submit responses using text, diagrams, images, or equations, depending on the subject

- The system analyses responses to identify conceptual elements and alignment with rubric criteria

- Faculty receive structured rubrics with suggested evaluations

- Faculty review, refine, or override evaluations as needed

- Students receive rubric-based feedback highlighting strengths and areas for improvement

This approach preserves faculty autonomy while reducing repetitive effort.
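The review step in this flow can be pictured with a small sketch. It is illustrative only and does not describe how Intelligent Learning Infrastructure is actually built: the class names, the 0 to 5 scale, and the rule that feedback is held until a faculty member approves are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class CriterionEvaluation:
    """One rubric criterion: a suggested score plus an optional faculty override."""
    suggested: int                  # score proposed by the system (assumed 0-5 scale)
    override: Optional[int] = None  # score set by faculty, if they disagree

    @property
    def final(self) -> int:
        # The faculty score always wins when one has been given.
        return self.suggested if self.override is None else self.override


@dataclass
class Evaluation:
    """Hypothetical container for one student's rubric evaluation."""
    scores: Dict[str, CriterionEvaluation] = field(default_factory=dict)
    reviewed: bool = False

    def faculty_override(self, criterion: str, score: int) -> None:
        if criterion not in self.scores:
            raise KeyError(f"unknown criterion: {criterion}")
        self.scores[criterion].override = score

    def approve(self) -> None:
        # Faculty sign-off; suggestions are accepted unless overridden.
        self.reviewed = True

    def release_feedback(self) -> Dict[str, int]:
        # Nothing reaches the student until a faculty member has reviewed it.
        if not self.reviewed:
            raise RuntimeError("faculty review required before feedback is released")
        return {name: c.final for name, c in self.scores.items()}


evaluation = Evaluation(scores={
    "Relevance": CriterionEvaluation(suggested=4),
    "Clarity": CriterionEvaluation(suggested=2),
})
evaluation.faculty_override("Clarity", 3)  # faculty refines one suggestion
evaluation.approve()
print(evaluation.release_feedback())
```

The design choice worth noting is that the suggested score is never overwritten. Keeping both values makes it possible to see later where faculty judgement differed from the suggestion.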

The Role of Customised Rubrics in Learning

| Subjective Assessment Rubrics |
| --- |
| Relevance |
| Content Accuracy |
| Coverage Depth |
| Clarity |
| Language Presentation |

Rubrics serve two purposes. For faculty, they provide a consistent framework for evaluation. For students, they clarify expectations and guide improvement.

When students understand how their responses are evaluated, feedback becomes actionable. Assessment shifts from being a one-time judgement to an opportunity for learning and reflection.

This clarity is especially important in subjective assessments, where expectations are often implicit rather than explicit.
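As a rough illustration of how rubric scores can become actionable feedback, the sketch below sorts the criteria from the rubric table into strengths and areas for improvement. The function name, the 0 to 5 scale, and the cut-off of 4 for a "strength" are assumptions chosen for the example, not values taken from any real system.

```python
from typing import Dict, List


def summarise_feedback(
    scores: Dict[str, int],
    max_score: int = 5,    # assumed scale; the article does not specify one
    strength_at: int = 4,  # assumed cut-off for calling a criterion a strength
) -> Dict[str, List[str]]:
    """Split rubric criteria into strengths and areas for improvement."""
    strengths: List[str] = []
    improvements: List[str] = []
    for criterion, score in scores.items():
        if not 0 <= score <= max_score:
            raise ValueError(f"{criterion}: score {score} is outside 0-{max_score}")
        if score >= strength_at:
            strengths.append(criterion)
        else:
            improvements.append(criterion)
    return {"strengths": strengths, "areas_for_improvement": improvements}


feedback = summarise_feedback({
    "Relevance": 5,
    "Content Accuracy": 4,
    "Coverage Depth": 2,
    "Clarity": 4,
    "Language Presentation": 1,
})
print(feedback)
```

A student reading this output sees which criteria to work on rather than a single total, which is the shift from score to understanding described above.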

Institutional Perspective

From an institutional standpoint, structured subjective assessment supports several objectives:

- Consistent evaluation standards across courses and sections

- Reduced dependency on individual grading styles

- Clear evidence of learning aligned with outcomes

- Stronger academic defensibility during audits and reviews

Institutions retain the depth of subjective evaluation while gaining reliability and transparency.

Preserving Academic Intent

Subjective assessments should continue to play a central role in higher education. They are essential for disciplines that value reasoning, explanation, and judgement.

The challenge is not whether to keep them, but how to support them effectively.

When embedded within Intelligent Learning Infrastructure, subjective assessments remain academically rigorous while becoming manageable at scale. Faculty focus on judgement and guidance, while the infrastructure supports consistency and insight.